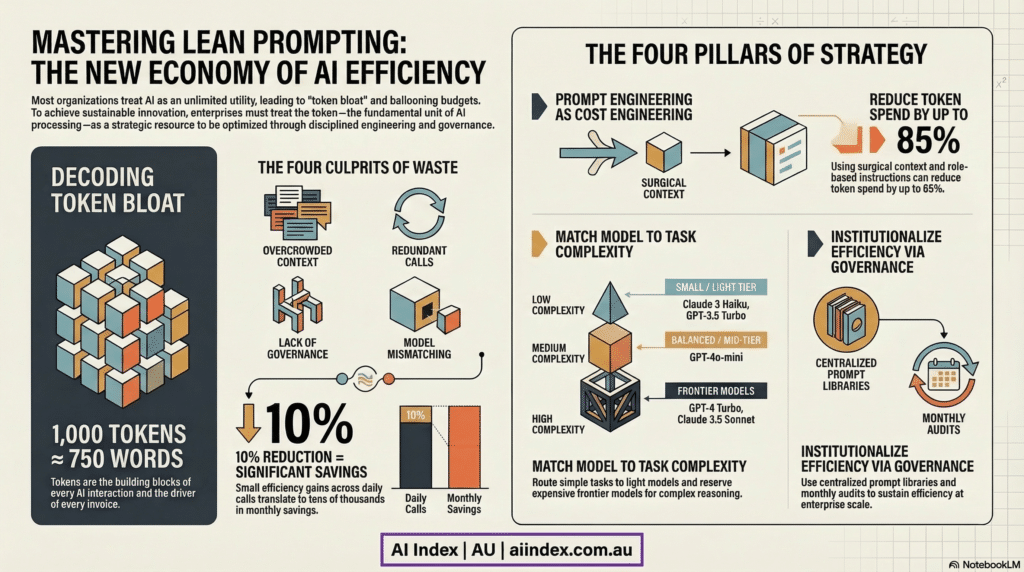

The Lean Prompting Handbook serves as a strategic manual for corporations looking to scale their AI operations while maintaining strict fiscal responsibility. The text argues that businesses must transition from unmonitored experimentation to a disciplined “New Economy of Intelligence” by mastering token efficiency.

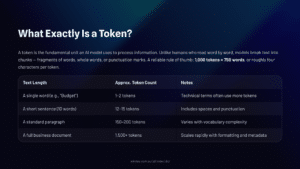

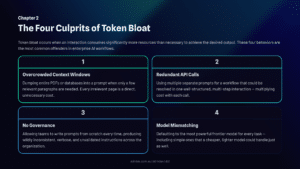

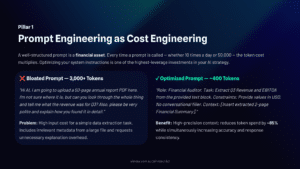

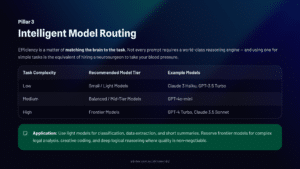

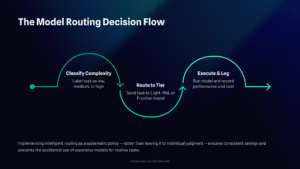

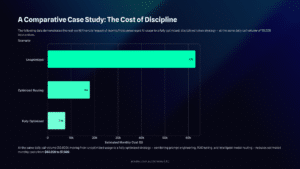

The handbook identifies common causes of financial waste, such as overcrowded context windows and redundant requests, and offers practical remedies such as multi-step prompting and intelligent model routing.
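To make two of these ideas concrete, here is a minimal sketch of model routing combined with a cache for redundant requests. It is not taken from the handbook: the tier names, the prices, the 500-token threshold, the four-characters-per-token heuristic, and the `call_model` callable are all hypothetical placeholders to be swapped for your own provider and numbers.

```python
import hashlib

# Hypothetical model tiers and per-1K-token prices; illustrative numbers only.
MODELS = {
    "small": {"price_per_1k_tokens": 0.0005},
    "large": {"price_per_1k_tokens": 0.0100},
}


def estimate_tokens(text: str) -> int:
    """Rough heuristic (an assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def estimate_cost(prompt: str, model: str) -> float:
    """Estimated input cost of sending `prompt` to the given tier."""
    return estimate_tokens(prompt) / 1000 * MODELS[model]["price_per_1k_tokens"]


def route(prompt: str, needs_reasoning: bool = False) -> str:
    """Pick the cheaper tier unless the task is flagged as complex or is long."""
    if needs_reasoning or estimate_tokens(prompt) > 500:
        return "large"
    return "small"


class CachedClient:
    """Wraps a model-calling function so identical requests are paid for once."""

    def __init__(self, call_model):
        # call_model is a hypothetical callable: (model_name, prompt) -> str
        self._call_model = call_model
        self._cache = {}
        self.calls = 0  # number of requests that actually reached the model

    def complete(self, prompt: str, needs_reasoning: bool = False) -> str:
        model = route(prompt, needs_reasoning)
        key = hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.calls += 1
            self._cache[key] = self._call_model(model, prompt)
        return self._cache[key]
```

In practice the routing rule would likely be driven by task type or a classifier rather than prompt length alone, and the cache would need an expiry policy; the point is only that both checks happen before any tokens are spent.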

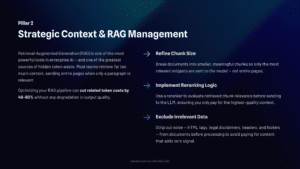

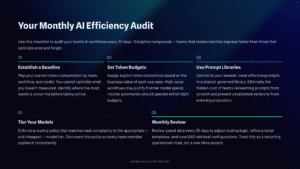

By optimizing RAG management and using standardized prompt libraries, the guide argues, organizations can achieve better results at a fraction of the cost of unoptimized usage. The guide also provides a reproducible framework and an audit checklist so that AI growth does not lead to unsustainable budget inflation.
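One way to read "RAG management" is as a budget on how much retrieved text enters the context window. The sketch below assumes retrieved chunks arrive as `(score, text)` pairs and uses the same rough four-characters-per-token estimate; the function name, the greedy selection rule, and the budget are illustrative assumptions, not the handbook's method.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic (an assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def select_chunks(chunks, budget_tokens):
    """Greedily keep the highest-scoring chunks that fit within the budget.

    chunks: list of (relevance_score, text) pairs from a retriever.
    Returns the selected texts and the estimated tokens they consume.
    """
    selected = []
    used = 0
    for _score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected, used


# Example: a long, low-relevance chunk is left out of the prompt.
retrieved = [(0.9, "a" * 40), (0.5, "b" * 400), (0.8, "c" * 80)]
kept, tokens_used = select_chunks(retrieved, budget_tokens=40)
```

A real pipeline would count tokens with the model's own tokenizer, but even a crude cap like this keeps low-relevance material from crowding the context window.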

Explore strategies to optimize AI token usage, reduce costs, and scale enterprise AI with prompt engineering, RAG management, and intelligent model routing for sustainable AI deployment.

Images Copyright AIIndex.com.au

Download the deck here: The-Lean-Prompting-Handbook